The OpenClaw exploitation wave marks a pivotal moment in AI security. Within 72 hours of its viral adoption in January 2026, multiple hacking groups began weaponizing vulnerabilities in the autonomous AI framework to steal API keys, deploy info-stealing malware, and execute remote shell commands across exposed systems.

More than 30,000 compromised instances have already been observed in active abuse campaigns.

For CISOs, SOC analysts, DevOps engineers, and AI security architects, this is not just another vulnerability story — it is a warning about the rapidly expanding attack surface of autonomous AI agents.

In this in-depth analysis, we break down:

- What OpenClaw is and why it became a target

- The CVEs and supply chain tactics used

- Real-world attack campaigns like ClawHavoc

- Business and compliance risks

- Actionable mitigation strategies

What Is OpenClaw and Why Is It High Risk?

OpenClaw (formerly MoltBot and ClawdBot) is an open-source autonomous AI framework developed by Peter Steinberger. Its rapid popularity in early 2026 led to widespread deployment across startups, research labs, and enterprise test environments.

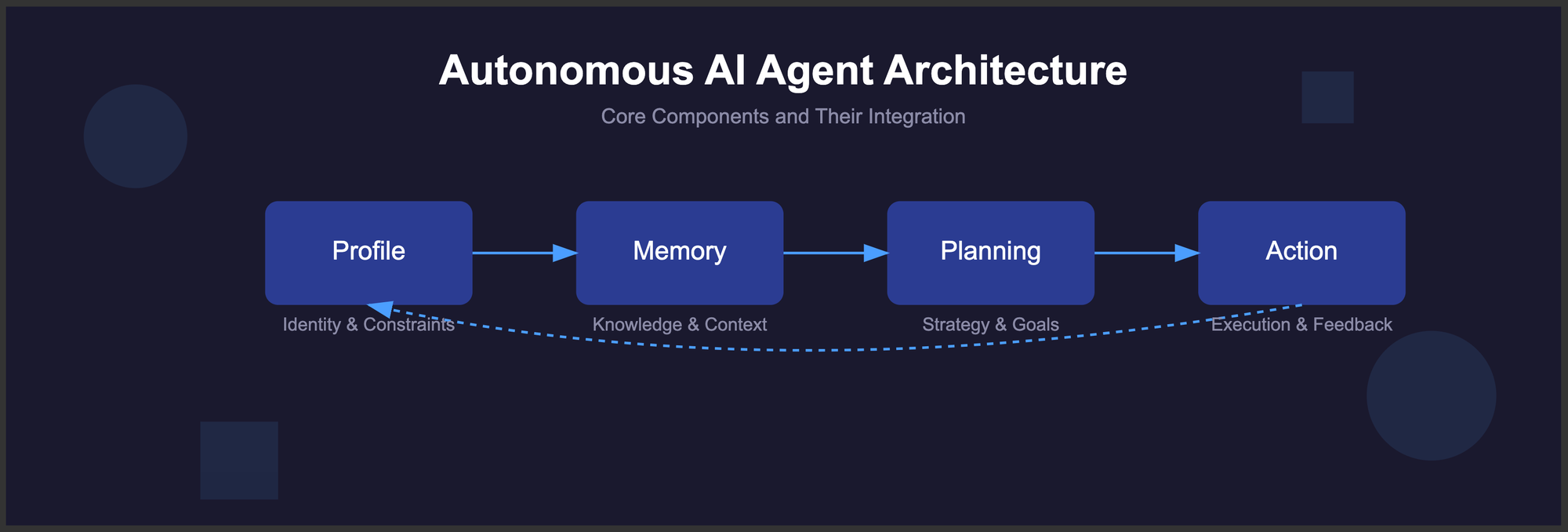

Key Architectural Characteristics:

- High system privileges

- Persistent memory storage

- OAuth and API integrations

- External “skill” marketplace support

- Autonomous command execution capability

These capabilities — designed for productivity — also created an ideal environment for:

- Credential harvesting

- Lateral movement

- Persistent backdoors

- Data exfiltration

CVE-2026-25253: Remote Code Execution at Scale

One of the most severe vulnerabilities exploited was:

CVE-2026-25253 – Remote Code Execution (RCE)

This flaw allowed attackers to:

- Execute arbitrary shell commands

- Deploy malware payloads

- Exfiltrate sensitive tokens

- Modify persistent AI memory states

Because many instances were internet-exposed on default port 18789, exploitation required minimal effort.

A Shodan scan on February 18, 2026 identified more than 312,000 exposed instances, many without authentication controls.

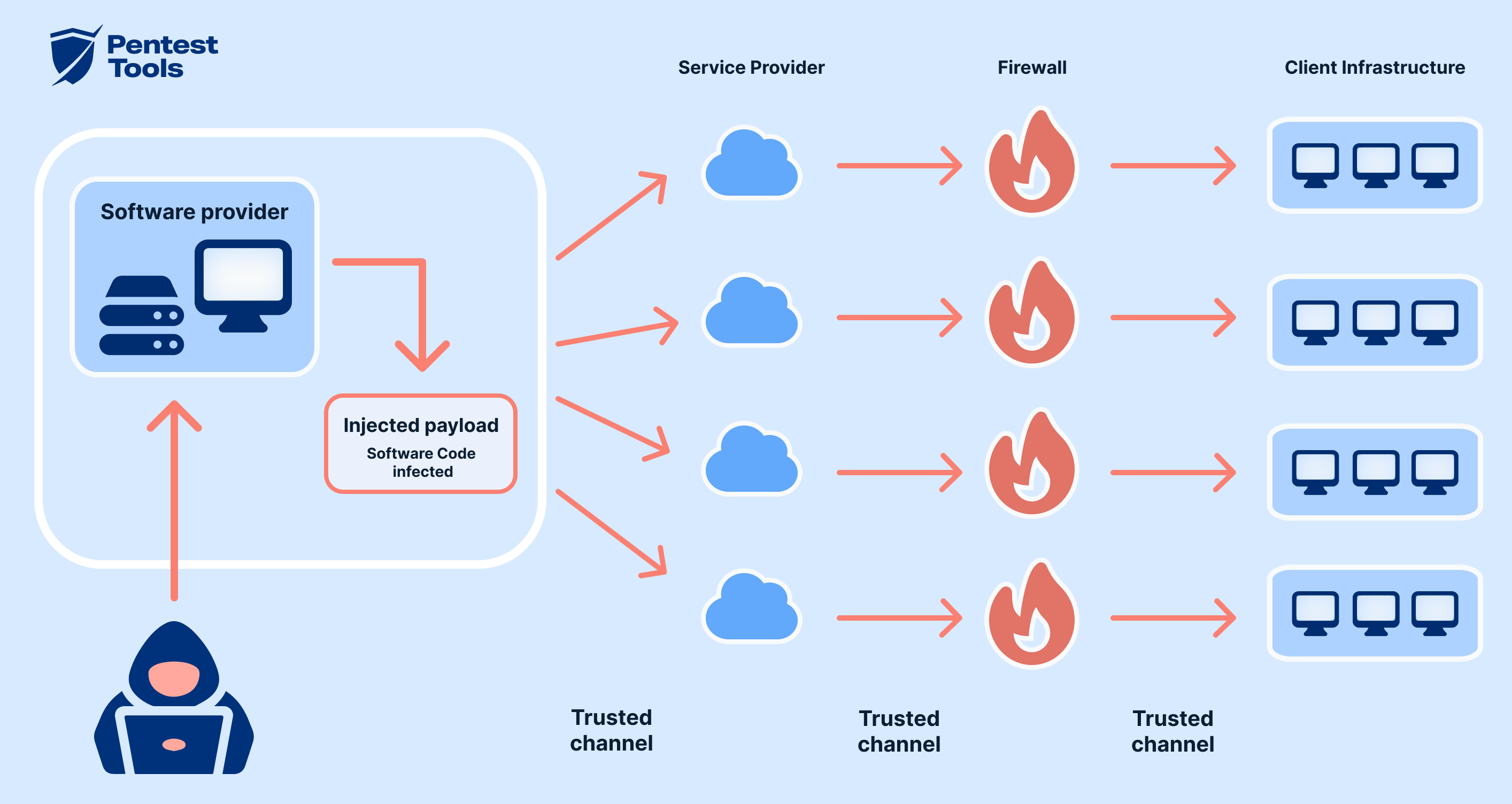
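A quick way to check whether your own hosts answer on the default port is a plain TCP connect test. The sketch below is a minimal illustration, assuming only that OpenClaw listens on TCP port 18789 as described above. The function names are hypothetical, and a successful connection shows only that something is listening, not that it is OpenClaw or that it is vulnerable. Run it only against systems you are authorized to test.

```python
import socket

DEFAULT_OPENCLAW_PORT = 18789  # default port named in this article


def is_port_reachable(host: str, port: int = DEFAULT_OPENCLAW_PORT,
                      timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def find_exposed(hosts: list[str], port: int = DEFAULT_OPENCLAW_PORT) -> list[str]:
    """Return the subset of hosts that accept TCP connections on the port."""
    return [h for h in hosts if is_port_reachable(h, port)]
```

Calling `find_exposed(["10.0.0.5", "10.0.0.6"])` with your own inventory returns the hosts that should be reviewed first. The addresses here are placeholders.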

ClawHavoc Campaign: Supply Chain Compromise

The first major campaign, ClawHavoc, was detected January 29, 2026.

Attackers used automated deployment infrastructure, including accounts such as “Hightower6eu”, to distribute malicious “setup” scripts disguised as cryptocurrency tooling.

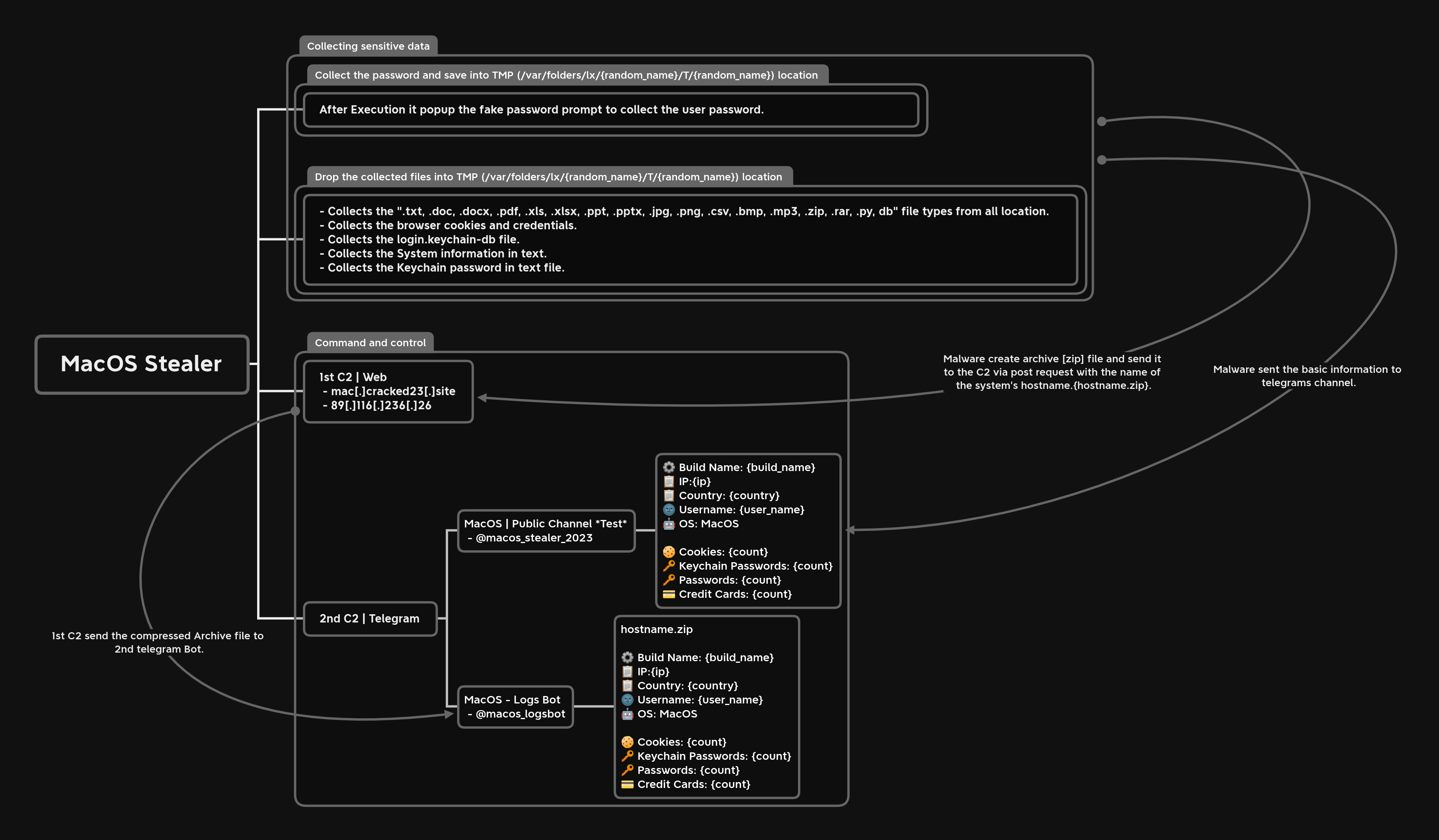

Payloads Included:

- Atomic Stealer (macOS)

- Windows keyloggers

- OAuth token harvesters

- API key interceptors

Once executed, these payloads enabled:

- Persistent memory extraction

- Enterprise lateral movement

- Credential replay attacks

This was a classic supply chain poisoning attack, similar in methodology to SolarWinds-style compromises — but targeted at AI infrastructure.

Automated Skill Poisoning via ClawHub Marketplace

A second campaign exploited OpenClaw’s open publishing model.

Because the “ClawHub” marketplace lacked mandatory code review, attackers uploaded backdoored “skills” from seemingly legitimate GitHub accounts.

Malicious Skill Behavior:

- Executed remote shell commands

- Exfiltrated OAuth tokens in real time

- Harvested stored API credentials

- Created reverse shells

This highlights a growing trend:

AI plugin ecosystems are becoming high-value malware distribution channels.
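The malicious behaviors listed above (shell execution, reverse shells) tend to leave traces in source code that a basic static check can flag before a skill is installed. The following is a minimal sketch, assuming skills are distributed as Python source, which this article does not confirm. The deny-lists and function names are hypothetical and are not part of any OpenClaw or ClawHub API. A check like this is easy to evade with obfuscation, so treat it as a triage aid rather than a replacement for code review.

```python
import ast

# Hypothetical deny-lists for illustration; not an official OpenClaw or ClawHub policy.
SUSPICIOUS_MODULES = {"subprocess", "socket", "ctypes", "pty"}
SUSPICIOUS_CALLS = {"eval", "exec", "os.system", "os.popen"}


def _call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for simple call targets."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def scan_skill_source(source: str) -> list[str]:
    """Return findings for risky imports and calls, ordered by line number."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in SUSPICIOUS_MODULES:
                    findings.append((node.lineno, f"imports {alias.name}"))
        elif isinstance(node, ast.ImportFrom):
            if node.module and node.module.split(".")[0] in SUSPICIOUS_MODULES:
                findings.append((node.lineno, f"imports from {node.module}"))
        elif isinstance(node, ast.Call):
            name = _call_name(node)
            if name in SUSPICIOUS_CALLS:
                findings.append((node.lineno, f"calls {name}()"))
    return [f"line {lineno}: {message}" for lineno, message in sorted(findings)]
```

Any non-empty result is a reason to route the skill to manual review before enabling it.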

How Attackers Monetized Compromised OpenClaw Instances

Observed monetization methods included:

- Selling stolen API keys on underground forums

- Intercepting chatbot conversations

- Distributing info-stealer malware via Telegram channels

- Leveraging enterprise tokens for cloud pivoting

Because OpenClaw maintains persistent memory and system access, attackers gained deeper visibility into victim environments than traditional phishing-based credential theft provides.

Why Autonomous AI Agents Are Prime Targets

1. Elevated Privileges

AI agents often run with system-level permissions.

2. Broad API Integrations

Connections to:

- GitHub

- Slack

- Cloud providers

- CI/CD pipelines

3. Persistent Memory

Stored secrets and context create a long-term compromise risk.

4. Internet Exposure

Rapid deployment often leaves instances reachable from the internet without secure configuration.
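The persistent-memory risk (point 3) can be reduced by scrubbing obvious secrets from text before it is written to an agent's memory store. The sketch below is an illustration under stated assumptions: the patterns cover only a few well-known token shapes, real key formats vary by provider, and the function name is hypothetical rather than part of OpenClaw.

```python
import re

# Illustrative patterns only; real key formats vary by provider.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "bearer_token": re.compile(r"(?i)\bbearer\s+[A-Za-z0-9._\-]{20,}"),
}


def redact_secrets(text: str) -> tuple[str, list[str]]:
    """Replace matches with a placeholder and report which pattern names matched."""
    hits = []
    for name, pattern in SECRET_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            hits.append(name)
    return text, hits
```

The returned list of pattern names can feed an alert, since a secret appearing in agent context at all usually means it was pasted or logged somewhere it should not have been.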

Risk Impact Analysis

| Risk Category | Severity |
|---|---|
| Credential Theft | Critical |
| RCE Exploitation | Critical |
| Data Exfiltration | High |
| Supply Chain Compromise | Critical |
| Regulatory Exposure | High |

Organizations subject to regulations and standards such as HIPAA, PCI-DSS, or GDPR may face investigation if stolen API keys expose customer data.

MITRE ATT&CK Mapping

The OpenClaw exploitation aligns with:

- Initial Access – Exploit Public-Facing Application (T1190)

- Execution – Command and Scripting Interpreter (T1059)

- Credential Access – Input Capture (T1056) and OS Credential Dumping (T1003)

- Persistence – Modify Authentication Process (T1556)

- Exfiltration – Exfiltration Over C2 Channel (T1041)

Common Security Mistakes Observed

- Exposing default port 18789 to the internet

- No authentication on admin interfaces

- Lack of API key rotation

- No sandboxing of AI workloads

- Blind trust in marketplace plugins

Defensive Best Practices for AI Frameworks

Immediate Actions

- Patch CVE-2026-25253 immediately

- Restrict public exposure via firewall rules

- Rotate all API keys used by OpenClaw

- Disable untrusted third-party skills

- Enable audit logging
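One way to act on "disable untrusted third-party skills" is to pin every reviewed skill file by its SHA-256 digest and flag anything else on disk. This is a minimal sketch, assuming skills are stored as files under a single directory. The directory layout and function names are hypothetical and do not reflect a documented OpenClaw feature.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def untrusted_skills(skill_dir: Path, approved_hashes: set[str]) -> list[Path]:
    """Return files under skill_dir whose digest is not in the reviewed allowlist."""
    return sorted(
        p for p in skill_dir.rglob("*")
        if p.is_file() and sha256_of_file(p) not in approved_hashes
    )
```

Pinning by content hash rather than by name or publisher means a skill that is silently updated after review shows up as untrusted again.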

Architectural Controls

- Implement Zero Trust network segmentation

- Use least-privilege IAM roles

- Store secrets in hardened vaults

- Apply runtime application self-protection (RASP)

- Monitor anomalous outbound traffic
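Monitoring anomalous outbound traffic can start with something as simple as comparing observed destinations against the short list of services an agent legitimately needs. The sketch below assumes you can export destination hostnames from proxy or DNS logs. The allowlist entries and function names are hypothetical examples, not recommended values.

```python
from collections import Counter
from typing import Iterable

# Hypothetical allowlist; replace with the destinations your agent legitimately needs.
ALLOWED_SUFFIXES = ("api.github.com", "slack.com")


def is_allowed(host: str) -> bool:
    """True if host equals an allowed domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == s or host.endswith("." + s) for s in ALLOWED_SUFFIXES)


def unexpected_destinations(hosts: Iterable[str]) -> Counter:
    """Count outbound connections to hosts outside the allowlist."""
    return Counter(h.lower().rstrip(".") for h in hosts if not is_allowed(h))
```

Matching on a leading dot prevents lookalike domains such as `notslack.com` from passing as subdomains of `slack.com`.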

Compliance Considerations

Under frameworks such as NIST CSF, ISO/IEC 27001, SOC 2, and PCI-DSS, exposed API keys and unauthorized RCE could constitute reportable security incidents.

AI deployments must now be treated as high-risk assets in risk registers.

Expert Insight: AI Capability vs. AI Security

OpenClaw’s rapid adoption illustrates a recurring pattern:

Innovation outpaces governance.

The framework prioritized capability, extensibility, and autonomy — but lacked:

- Mandatory authentication enforcement

- Secure-by-default configuration

- Marketplace vetting controls

- Built-in anomaly detection

Autonomous agents are not “just tools” — they are privileged digital operators.

FAQs

1. What is the OpenClaw exploitation campaign?

A wave of attacks in which multiple hacking groups exploited OpenClaw vulnerabilities to steal API keys and deploy malware.

2. How many instances were compromised?

Over 30,000 confirmed compromised instances, with 312,000+ exposed publicly.

3. What is CVE-2026-25253?

A critical Remote Code Execution flaw enabling arbitrary shell command execution.

4. How did supply chain poisoning occur?

Malicious “skills” and setup scripts were uploaded to the OpenClaw ecosystem without code review.

5. Are autonomous AI frameworks secure by default?

No. Many prioritize flexibility over hardened security controls.

6. What should companies do immediately?

Patch, isolate, rotate credentials, restrict exposure, and monitor for abnormal API usage.

Conclusion: A Turning Point in AI Security

The OpenClaw exploitation campaigns signal a fundamental shift in cybersecurity:

Autonomous AI agents are now prime attack infrastructure.

Organizations must:

- Treat AI frameworks as privileged assets

- Apply zero trust principles

- Enforce secure-by-design standards

- Conduct AI-specific threat modeling

The future of AI innovation depends on embedding security at the architectural layer — not as an afterthought.